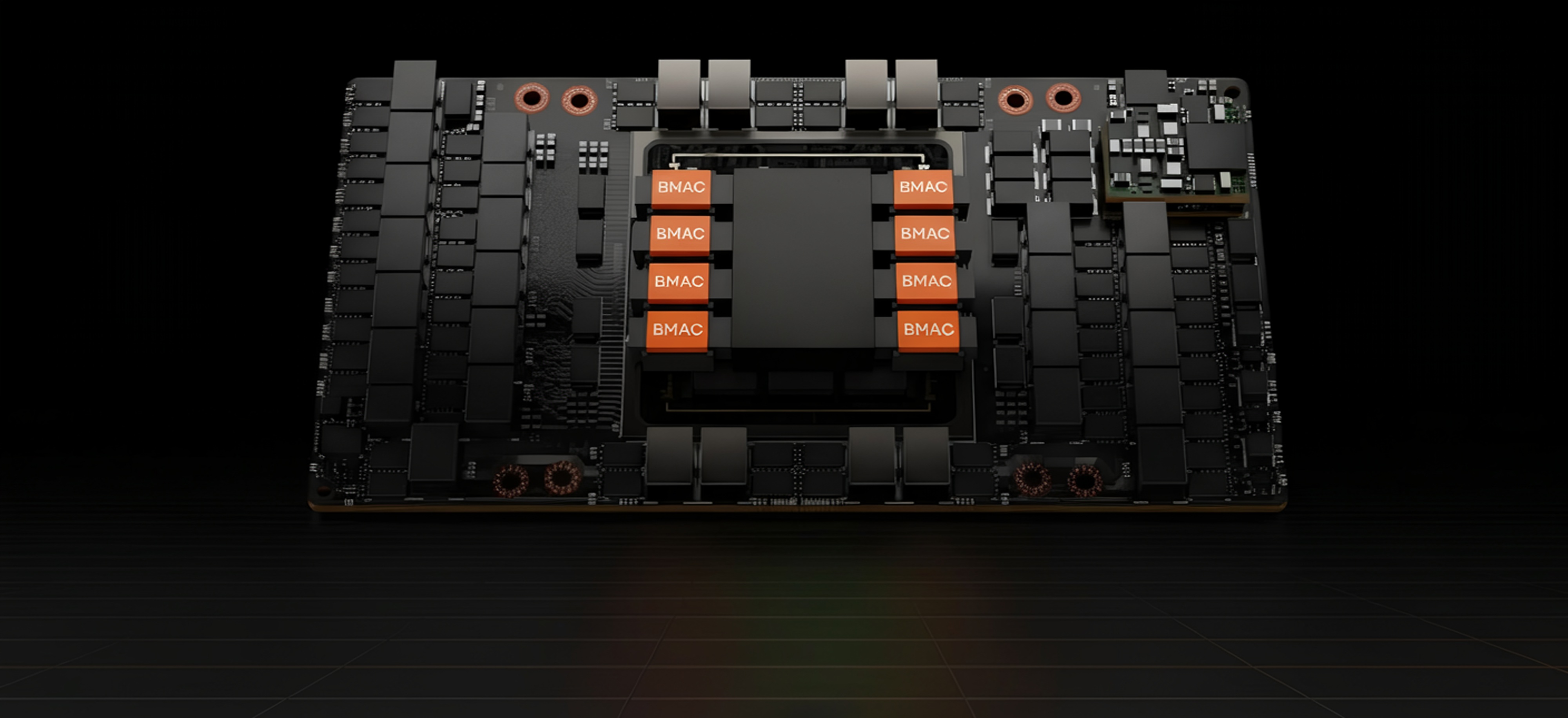

Inference Hardware for Every Scale

AI inference is its own discipline.

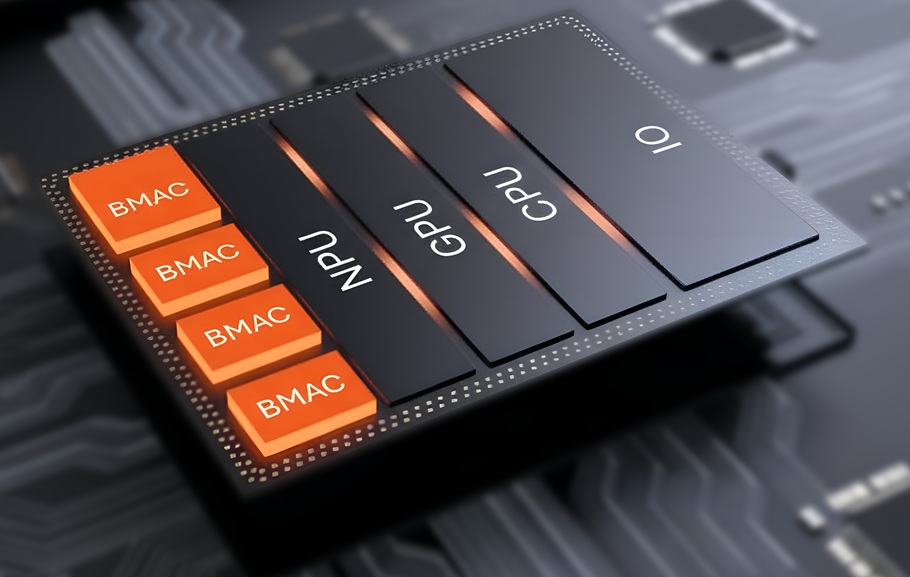

BMAC is built specifically for inference workloads.

Deterministic Execution

Deployment Density

Architectural Efficiency

Integration Flexibility

Designed for Inference Execution

- Eliminates dependence on HBM-centric memory systems.

- Eliminates heavy memory movement during inference.

- Supports diverse model sizes and model types.

One Architecture. Multiple Markets.

Cloud Infrastructure

Rackable inference accelerators that deploy into existing datacenter environments without exotic rebuilds.

OEM / ODM Integration

Dedicated modules for integration into server platforms and custom hardware systems.

Device and Mobile

Design access for smartphone and edge silicon developers.

Defense, Confidential, and Autonomous Computing

Inference hardware for secure, confidential, and autonomous computing environments.

Design Focus

Deterministic inference execution

Deployment versatility

Flexible integration pathways

Independence from external memory bandwidth